OpenAI releases GPT-5.5, claims it can navigate your messy prompts

OpenAI launched GPT-5.5 and, aside from gains on the usual benchmarks, the company claims the model can make sense of messy prompts and directions.

According to OpenAI, GPT-5.5 "understands what you’re trying to do faster and can carry more of the work itself…Instead of carefully managing every step, you can give GPT‑5.5 a messy, multi-part task and trust it to plan, use tools, check its work, navigate through ambiguity, and keep going."

OpenAI said the primary use cases for GPT-5.5 are coding, computer use, knowledge work and early scientific research.

In addition, OpenAI said the boost in intelligence with GPT-5.5 doesn't blow your token budget and holds the efficiency line set by GPT-5.4. "GPT‑5.5 matches GPT‑5.4 per-token latency in real-world serving, while performing at a much higher level of intelligence. It also uses significantly fewer tokens to complete the same Codex tasks, making it more efficient as well as more capable," said OpenAI.

GPT-5.5 also has a Pro version. Both will roll out to subscription customers and land in API deployments soon.

For OpenAI, GPT-5.5 is an incremental yet important launch, given that Anthropic just launched Opus 4.7 and Google is likely to roll out its next-generation models at Google I/O.

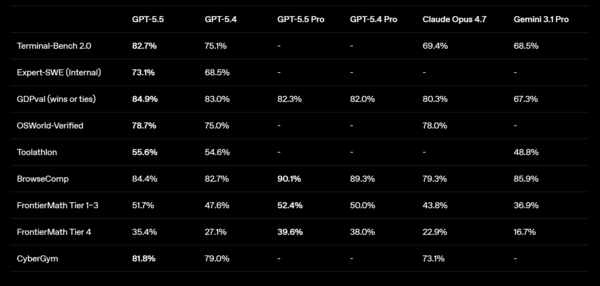

Here's a look at the benchmarks.

Key points:

- GPT-5.5 improves on GPT-5.4 for generating documents, spreadsheets and slides.

- If GPT-5.5 delivers on what OpenAI is promising, it should be more intuitive and better at connecting the dots in what you're asking. The downside is that it may guess wrong when your instructions are unclear.

- The GPT-5.5 release appears to be a key step in making Codex more useful and part of everyday workflows.

- OpenAI said GPT-5.5 represents a new approach to inference. "GPT‑5.5 was co-designed for, trained with, and served on NVIDIA GB200 and GB300 NVL72 systems. Codex and GPT‑5.5 were instrumental in how we achieved our performance targets," said OpenAI.