Nvidia GTC 2026: We're a software company too

Nvidia is known for its GPUs, and now for CPUs, LPUs and rack systems designed to power AI agents well into the future. But it's becoming increasingly clear that Nvidia is going to become the operating system for AI workloads.

CEO Jensen Huang loves his toys, and the GTC 2026 keynote included plenty of them. But don't take your eye off the road: we're headed toward Nvidia as a software company. Huang talked up the 20-year anniversary of CUDA, which is an example of Nvidia's software fortress. "With CUDA, we support the entire every single phase of the AI lifecycle. We address every single data processing platform. We accelerate scientific principle solvers of all different kinds. And so the application reach is so great that once you install NVIDIA GPUs, the useful life of it is incredibly high," said Huang.

Not every software platform is as big as CUDA, but Nvidia continually updates its applications, platforms and libraries. "We're announcing 170 libraries, maybe 40 models, and that's just at the show. We're updating these all the time. The libraries is the crown jewels of our company," said Huang.

Here's a roundup of the software-related news.

Dynamo 1.0

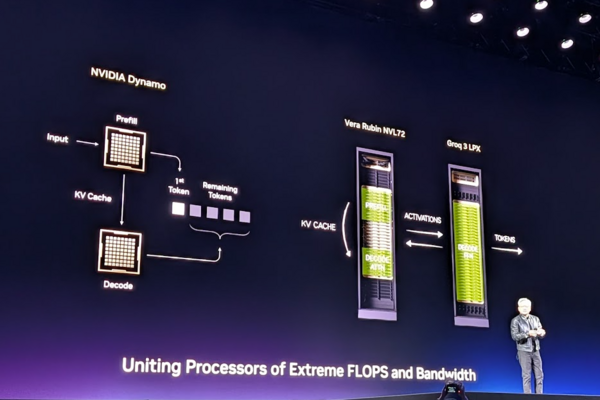

Nvidia announced the launch of Dynamo 1.0, an open source distributed "operating system" for AI factories. The platform is built to orchestrate GPU and memory resources across clusters, specifically targeting generative and agentic inference.

According to Nvidia, Dynamo has boosted inference performance of Nvidia Blackwell GPUs by up to 7x. Nvidia is integrating Dynamo and TensorRT-LLM optimizations natively into open-source frameworks, including LangChain, llm-d, LMCache, SGLang, and vLLM.

The inference platform is already being integrated by AI-focused cloud providers such as CoreWeave and Nebius, as well as by AWS, Microsoft Azure, Google Cloud and Oracle Cloud Infrastructure.

Dynamo is also how Nvidia bridged Groq chips with Vera Rubin.

NemoClaw and agents

Nvidia launched a project called NemoClaw, which aims to replicate OpenClaw for the enterprise. NemoClaw is focused on self-evolving and trustworthy agents. The idea is to use NemoClaw to build and operate agentic systems.

Nvidia also launched an Agent Toolkit with open models, runtimes and blueprints for building autonomous agents with components like Nemotron and Dynamo.

AI factory optimization

The Vera Rubin DSX AI Factory Reference Design includes a set of modular applications:

- DSX Max-Q, which maximizes token performance per watt within a fixed power budget.

- DSX Flex, which connects AI factories to power-grid services to manage demand.

- DSX Exchange, for secure integration of IT and operational technology signals.

- DSX Sim, which validates designs through high-fidelity digital twins.

A parade of open models

Nvidia is the US champion of open-source AI models and expanded its roster at GTC 2026.

The company announced an expansion of its open model families to power the next generation of agentic, physical, and healthcare AI.

- Nemotron 3 Ultra: Delivers frontier-level intelligence with high throughput efficiency.

- Nemotron 3 Omni: Integrates language, vision, and audio to extract insights from documents and videos.

- Nemotron 3 VoiceChat: Enables real-time conversational capabilities.

- Safety and Datasets: New safety models improve trust, while "Nemotron-Personas" provides privacy-preserving synthetic datasets.

On the physical AI front, Nvidia announced the following:

- Nvidia Cosmos 3: Unifies synthetic world generation and physical AI reasoning.

- Nvidia Isaac GR00T N1.7: An open vision-language-action (VLA) model for humanoids.

- Nvidia Alpamayo 1.5: A reasoning VLA model for autonomous vehicles.

- GR00T N2: Nvidia previewed this next-generation robot foundation model, which is slated for release by the end of the year.

For healthcare, Nvidia outlined its BioNeMo additions with Proteina-Complexa, a generative model used for protein binder design and drug discovery. Nvidia said it is adding 1.7 million high-confidence predictions to the AlphaFold Protein Structure Database in conjunction with various partners.

The Nemotron Coalition

Black Forest Labs, Cursor, LangChain, Mistral AI, Perplexity, Reflection AI, Sarvam and Thinking Machines Lab are inaugural members of the Nemotron Coalition. The group plans to advance open-source foundational models.

Key goals of the Nemotron Coalition include:

- Build open models together, trained on Nvidia DGX Cloud and then open sourced for customization by sector.

- Build Nemotron 4, a base model co-developed by Mistral AI and Nvidia that will spawn a family of models.

- Promote transparency, collaboration, and sovereignty, ensuring that AI is accessible and not controlled by a single entity.

Autonomous vehicles

Nvidia announced the expansion of its DRIVE Hyperion platform to support the development and deployment of Level 4 (L4) autonomous vehicles.

Partners for the DRIVE platform include BYD, Geely, Isuzu and Nissan as well as Bolt, Grab and Lyft.

Industrial AI

Cadence, Dassault Systèmes, PTC, Siemens and Synopsys are building on CUDA-X and Omniverse to create a bevy of AI agents and industrial applications.