Google Cloud Invests In Data Services and ML/AI, Scales Business

Former Vice President and Principal Analyst

Constellation Research

Doug Henschen is a former Vice President and Principal Analyst at Constellation Research, where he focused on data-driven decision making. Henschen’s Data-to-Decisions research examines how organizations employ data analysis to reimagine their business models and gain a deeper understanding of their customers. Henschen’s research acknowledges the fact that innovative applications of data analysis require a multi-disciplinary approach starting with information and orchestration technologies, continuing through business intelligence, data visualization, and analytics, and moving into NoSQL and big-data analysis, third-party data enrichment, and decision-management technologies.

Insight-driven business models are of interest to the entire C-suite, but most particularly chief executive officers, chief digital officers, …


Google Cloud is adding must-have enterprise features and scaling the business to meet data platform, machine learning and AI demand. Here’s a progress report.

Google’s reputation for big data, machine learning and artificial intelligence innovation is richly deserved. The challenge for Google Cloud – which exposes that innovation to enterprises through the Google Cloud Platform (GCP) – isn’t scaling the technology so much as scaling the business.

Last week’s Google Cloud Next '17 event in San Francisco was a case in point. The event was sold out, with more than 10,000 attendees packing the Moscone Center’s West hall. Many conference sessions were overbooked and had long standby lines. You got the feeling Google could have easily filled another massive conference hall.


So what is Google Cloud doing to meet fast-growing demand? For starters, parent company Alphabet Inc. is investing big money in the business — more than $30 billion, according to Chairman Eric Schmidt, a keynoter at the event. But Google Cloud is not only hiring on all fronts and expanding the ecosystem, it’s also doubling down on tech investments in data centers and network improvements; new big data, machine learning (ML) and artificial intelligence (AI) services; and enterprise-oriented migration, administrative, governance and security features. Here’s a closer look at the business- and data-tech-oriented announcements.

Business-Side Investments

You may get started on a public cloud through clicks and credit card payments, but it takes lots of people-centric interaction and hand-holding to move an entire business into the cloud at scale. That’s why Google Cloud saw the largest headcount increase of any Alphabet business in 2016, and it expects to add at least 1,000 more salespeople in the first six months of this year. What’s more, Google Cloud’s professional services team has seen 5X growth over the last year, and partnerships with systems integrators and resellers are quickly multiplying.

At Google Cloud Next, Diane Greene, senior VP of Google Cloud, announced a significant new partnership with SAP, adding to a growing list of enterprise software partners. SAP executive board member Bernd Leukert joined Greene on stage to highlight certification of SAP HANA as well as plans for joint work on enterprise-grade data governance and compliance in the cloud. The more such partnerships Google can forge with blue-chip enterprise software vendors, the better.

Of course, the big draw for many companies to Google is its expertise in ML and AI. This point was validated onstage at Google Cloud Next by C-level customer guests from Colgate-Palmolive, Disney, Home Depot, HSBC and Verizon. And bolstering the company’s case, Google Cloud Chief Scientist Fei-Fei Li announced the acquisition of Kaggle, a company that has been a magnet for more than 850,000 data scientists through its famed Kaggle competitions.

Bringing Kaggle inside the Google Cloud ecosystem “combines the world’s largest data science community with the world’s most powerful machine learning cloud,” wrote Kaggle CEO Anthony Goldbloom. It also gives Kaggle and all those data scientists access to (and, it’s hoped, familiarity and comfort using) Google Cloud infrastructure, scalable training and deployment services and the ability to store and query large data sets.

MyPOV on business-side investments: Google Cloud clearly has big momentum. But whatever it’s investing in people and customer support, the company could probably double the effort and still not match the scale of its chief rival, Amazon Web Services (AWS). Amazon does not break out AWS headcount from its far more labor-intensive retail business, so it’s not an apples-to-apples comparison, but Amazon is many times larger than Google, with nearly 350,000 employees and plans to hire more than 100,000 more full-time employees over the next 18 months.

Suffice it to say that Google Cloud needs to stay aggressive on workforce expansion. My sense is that it’s growing as fast as it can without creating the sort of internal chaos that could negatively impact customer experience. Partnerships with systems integrators and high-scale vendors like SAP and acquisitions such as the Kaggle deal are smart ways to grow the ecosystem without putting all the pressure on internal development.

Data-Platform Investments

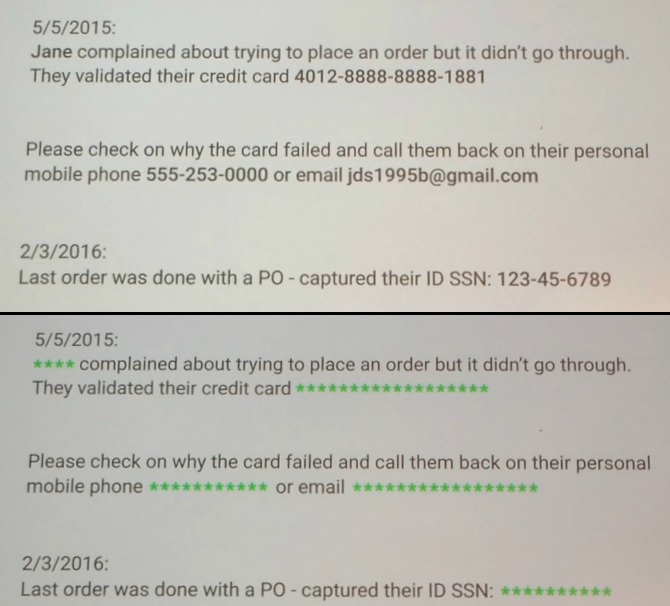

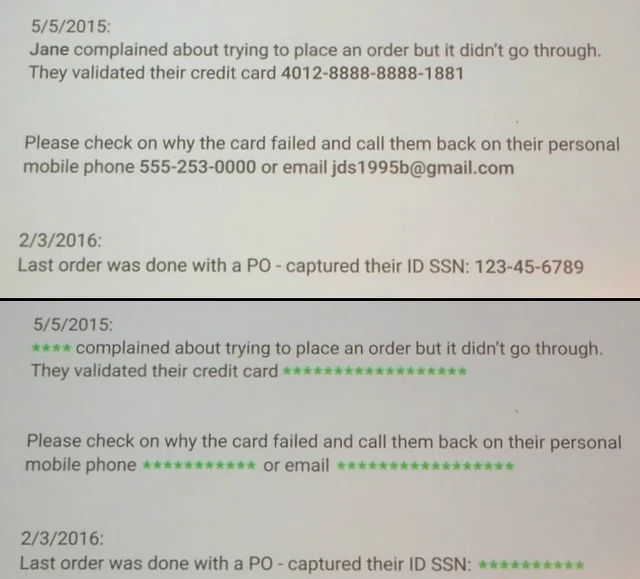

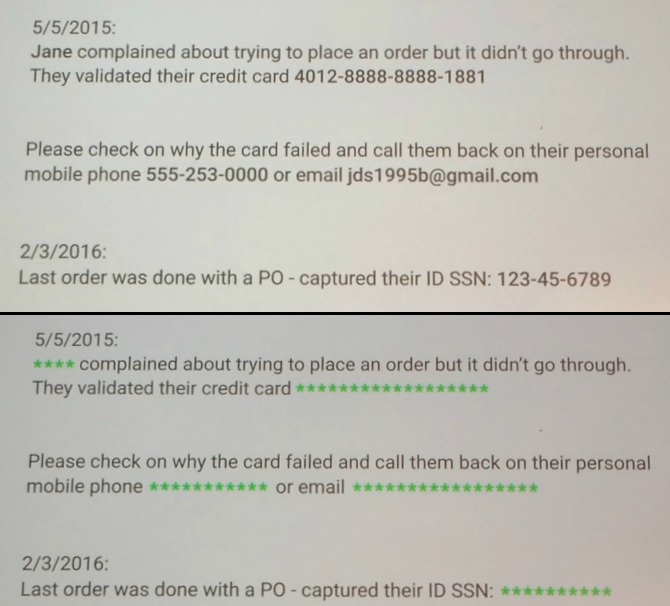

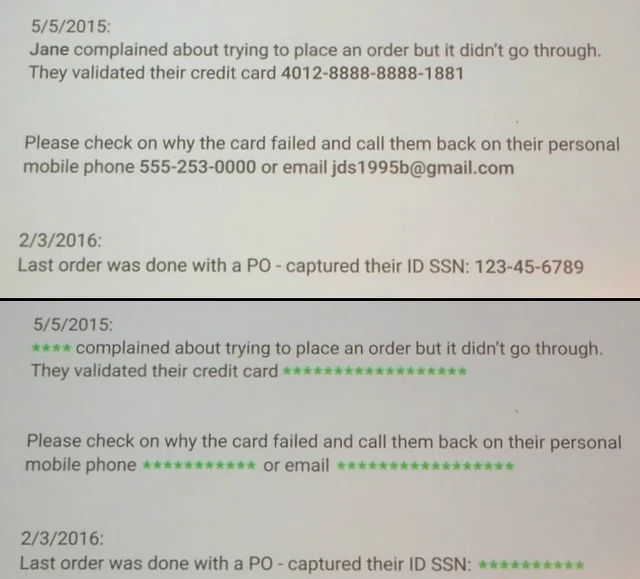

Google is investing on many technology fronts, but my focus is on data platforms, ML and AI, so I’m not going to get into the details of the three new data center regions, app developer news or the G Suite announcements (see Alan Lepofsky’s blog). There were also many infrastructure and security announcements, but the one most relevant to my data-to-decisions research is the new Data Loss Prevention (DLP) API. Now in beta, the DLP API promises to automatically discover, classify and redact sensitive information such as Social Security numbers, credit card numbers, phone numbers and more.

Together with security key and encryption developments, Google is addressing cloud security concerns that persist no matter how many times actual breaches show conventional corporate data centers to be far more vulnerable than clouds.

Google Cloud’s Data Loss Prevention API, now in beta, is designed to automatically discover, classify and redact sensitive data, as shown above.
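To make the discover, classify and redact steps concrete, here is a minimal sketch of the idea in plain Python. It is not the DLP API and does not call any Google service: the regular expressions, the label names and the `find_sensitive` and `redact` helpers are all hypothetical and far simpler than what a production detector would need.

```python
import re

# Hypothetical, simplified patterns for illustration only.
# A real detector handles many more formats and validates matches.
PATTERNS = {
    "US_SOCIAL_SECURITY_NUMBER": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CREDIT_CARD_NUMBER": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
    "PHONE_NUMBER": re.compile(r"\(\d{3}\) ?\d{3}-\d{4}|\b\d{3}-\d{3}-\d{4}\b"),
}


def find_sensitive(text):
    """Discover and classify: return (label, start, end) per match, in text order."""
    findings = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            findings.append((label, match.start(), match.end()))
    return sorted(findings, key=lambda finding: finding[1])


def redact(text):
    """Redact: replace each finding with its bracketed label."""
    pieces = []
    cursor = 0
    for label, start, end in find_sensitive(text):
        if start < cursor:
            continue  # skip a finding that overlaps one already redacted
        pieces.append(text[cursor:start])
        pieces.append(f"[{label}]")
        cursor = end
    pieces.append(text[cursor:])
    return "".join(pieces)


if __name__ == "__main__":
    sample = "SSN 123-45-6789, card 4111 1111 1111 1111, phone (415) 555-0100."
    print(redact(sample))
```

The sketch separates finding from redacting, so the same findings could drive either reporting or masking. Hand-written patterns like these miss many real-world formats, which is the gap a managed classification service is meant to close.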

As for those data platform, ML and AI announcements, here’s a rundown of the highlights:

Cloud ML Engine goes GA. This managed machine learning service based on Google’s open sourced TensorFlow ML framework is now generally available. The service features automatic hyperparameter tuning and tools for job management and resource utilization. In beta are GPU-based training and online prediction, which promise state-of-the-art performance at scale.

Cloud Video Intelligence API. Now in private beta, this service is designed to search and automatically tag large libraries of videos. The service spots entities within videos and notes the timecode of their appearance, making sense of individual videos and large video collections in an automated fashion. Developers need no knowledge of machine learning or deep learning, says Google. They simply invoke the API.

Cloud Dataprep. This service, now in beta, promises to bring self-service data preparation to structured and unstructured information. The no-code, point-and-click interface provides a guided approach to joining data and automated data-transformation suggestions.

Google BigQuery Data Transfer Service. Also in beta, this managed data-import service will launch with support for moving data from DoubleClick, Google AdWords and YouTube, but Google Cloud execs say they’re keen on providing managed, automated migration options from other high-volume data sources. Stay tuned.

Cloud SQL for PostgreSQL. This managed service, now in beta, supports the popular, enterprise-standard, open-source relational database, opening the door for a world of compatible enterprise applications.

MyPOV on the tech announcements: Google Cloud Next announcements were heavy on betas and light on general releases, although the company less than a month ago announced the GA of its Cloud Spanner service. Spanner is Google’s globally distributed relational database service. It’s unique (and distinguished from global NoSQL database services) in offering strong consistency for financial services, advertising, retail, supply chain and other demanding applications that require synchronous replication.

Aside from its big data, ML and AI depth, another differentiator for Google Cloud is the open-source nature of Google Cloud Platform services. Cloud Dataproc, for example, is based on Apache Spark and Apache Hadoop, and the batch-and-stream-data processing capabilities of Cloud Dataflow were open sourced as Apache Beam. This means you could run all these technologies on premises, in other clouds or in hybrid scenarios, diminishing vendor lock-in. It’s a contrast with AWS services that are unique to that vendor, although I don’t recall any customers discussing actual hybrid deployments or moving between cloud and on-premises deployments.

The more practical and real differentiator with Google Cloud Platform – and the one that multiple customers at Google Cloud Next actually talked about – is the managed nature of its services. With BigQuery, for example, you load data and issue queries and Google automatically makes all the necessary moves to ensure adequate compute and storage capacity. With unmanaged cloud services you have to think about, plan and take care of all the provisioning. And if you get it wrong, you either overpay for capacity or suffer from poor performance. This has been Google Cloud’s ace in the hole, but it’s a card the vendor is now playing up with every customer and at every opportunity.
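The provisioning trade-off described above can be illustrated with a little arithmetic. The sketch below is purely illustrative: the `provisioning_gap` helper and the demand figures are hypothetical, the units are arbitrary, and nothing here reflects how any cloud vendor actually bills or scales.

```python
def provisioning_gap(hourly_demand, provisioned):
    """Return (idle_units, unmet_units) for a fixed capacity level.

    idle_units  -- capacity paid for but not used (overpaying)
    unmet_units -- demand that exceeded capacity (poor performance)
    """
    idle = sum(max(provisioned - demand, 0) for demand in hourly_demand)
    unmet = sum(max(demand - provisioned, 0) for demand in hourly_demand)
    return idle, unmet


if __name__ == "__main__":
    # Hypothetical demand per hour, in arbitrary capacity units.
    demand = [20, 35, 90, 40]
    for capacity in (30, 60, 100):
        idle, unmet = provisioning_gap(demand, capacity)
        print(f"capacity={capacity}: idle={idle}, unmet={unmet}")
```

With spiky demand, no single fixed capacity level keeps both numbers low: a small allocation leaves demand unmet, and a large one sits idle most of the time. A managed service that adjusts capacity on the customer's behalf is meant to remove that guesswork.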

Related Reading:

Cloud BI and Analytics Options Aren’t Just for Cloud Data

AWS Analytic and AI Services Are No Surprise, But They Will Succeed

Strata + Hadoop World Highlights Long-Term Bets on Cloud

Data to Decisions

Digital Safety, Privacy & Cybersecurity

Innovation & Product-led Growth

Tech Optimization

Future of Work

Next-Generation Customer Experience

Google Cloud

Google

SaaS

PaaS

IaaS

Cloud

Digital Transformation

Disruptive Technology

Enterprise IT

Enterprise Acceleration

Enterprise Software

Next Gen Apps

IoT

Blockchain

CRM

ERP

CCaaS

UCaaS

Collaboration

Enterprise Service

AI

ML

Machine Learning

LLMs

Agentic AI

Generative AI

Robotics

Analytics

Automation

Quantum Computing

developer

Metaverse

VR

Healthcare

Supply Chain

Leadership

business

Marketing

finance

Customer Service

Content Management

Chief Customer Officer

Chief Information Officer

Chief Marketing Officer

Chief Digital Officer

Chief Technology Officer

Chief Information Security Officer

Chief Data Officer

Chief Executive Officer

Chief AI Officer

Chief Analytics Officer

Chief Product Officer
