Personal Observations for the IT Department on Digital Business, IoT & AI

Vice President and Principal Analyst, Constellation Research


Andy Mulholland is Vice President and Principal Analyst focusing on cloud business models. Formerly the Global Chief Technology Officer for the Capgemini Group from 2001 to 2011, Mulholland successfully led the organization through a period of mass disruption. Mulholland brings this experience to Constellation’s clients seeking to understand how Digital Business models will be built and deployed in conjunction with existing IT systems.

Coverage Areas

Consumerization of IT & The New C-Suite: BYOD,

Internet of Things, IoT, technology and business use

Previous experience:

Mulholland co-authored four major books that chronicled the change and its impact on Enterprises, starting in 2006 with the well-recognised book ‘Mashup Corporations’, written with Chris Thomas of Intel. This was followed in…...


I rarely write a blog based on personal opinion, as my engineering background always favors researched, factual reporting. Opinion pieces are, by necessity, based on subjective views, and to have value they must be insightful. Having prepared and published research presenting the ‘Big Picture’ view of Digital Enterprises functioning in the Digital Economy, it became all too clear that, while individual technologies might be understood by IT, their deployment in Digital Business was not.

In fact, the alignment between IT and Business management in the deployment of IoT as a core element of a Digital Enterprise ranged from absent to poor. Industrial companies with Operational Technology departments had moved swiftly forward, usually with limited reference to the Information Technology operation. This blog provides a simple summary of the main points that, in my opinion, IT professionals have to understand to see the ‘Big Picture’ of Digital Business and how it will transform their Enterprise.

As the above is controversial, though easy to prove with evidence, I am going to start by explaining why researching a Big Picture report gives a different view from reporting on particular elements in a specialized manner, and why and how such work can produce insightful views in addition to the factual report itself.

An Analyst interacts with many different people, with a wide range of roles, experiences and employers, to build the ‘big picture’ view across an entire market spanning products, companies and deployments. Each discussion revolves around an in-depth view of a microcosm that is one part of the whole picture; in a mature market, integration into the whole is part of the discussion.

In an immature market, where both the Business deployment and the Technology products are still rapidly emerging, the degree of consensus among views is a critical issue. The concepts and practices of ‘Digital’ make for not just a Big Picture but an entire redesign of Business, and of the enabling Technology Frameworks, into a new inclusive Digital Economy over the coming years.

A great deal of research, with the active cooperation of vendors and users, was required to produce the final report, which can be found here as an abbreviated set of Blogs under the title Distributed Business and Technology models. (The title aims to be ‘neutral and inclusive’, avoiding terms with strong individual definitions.) The resulting report defines the aspirational target both for users planning their business and technology adoption strategy, and for vendors planning their product and service feature development.

Most recognized markets, whether in business or technology, usually have a reasonable match between Business user expectations and Technology vendors’ product capabilities. Sadly, in Digital Business this is not so; there are serious mismatches between Business Managers, those deploying, and the vendors of the Technology. The goal of this blog is to identify what I believe are some of the biggest gaps, or issues, that need to be addressed.

Currently, both Business management and Technology management are simultaneous drivers of deployment, but for completely different benefits and reasons. The vision of Digital Business is all too often remote from that of the IT department. In manufacturing, and in certain industry sectors such as Buildings or Medical, the presence of Operational Technology departments has helped to overcome the ‘gap’, and these sectors are adopting the new practices at an accelerating rate.

Cloud is an excellent example of this: the Information Technology department will be well advanced in using Cloud to reduce costs through centralized, large-scale data centers, whilst Business Management and Operational Technology see Cloud as the technology that supports the distributed, low-latency edge computing requirements of IoT in a Digital Business.

Sadly, many of the following comments seem to target the IT department and its need to come to terms with a Business and Technology transformation of a type last experienced in the early 90s, which led to the creation of the Enterprise IT department. The following comments assume that IT professionals and departments are keen to identify their new, or additional, role in the Digital Enterprise.

After twenty-five years of Client-Server-based Enterprise IT driven by the Close Coupled, Stateful architecture model, moving to an altogether different Business model using a Loose Coupled, Stateless architecture is a radical technology change. After all, the current architecture has proved adaptable enough to accommodate the Internet, the Web, Mobility and Clouds, so why not IoT and AI as well?

The following points, gathered from a wide range of sources, are in my opinion where misunderstandings around the Digital Business model and its enablement with Technology are most common. They start with the fundamental question: what is Digital Business?

Digital Business, the Digital Economy, and similar terms are widely used, often interchangeably, but their real definitions are not well understood, and they are often seen as part of the current Internet/Web economy. The prevailing view is that Digital Business is an extension of the current model, with new technology capabilities added to support increased volumes of business. There is a widespread failure to grasp that Digital in this context refers to a new generation of Commerce that changes Business models and Enterprise organizational structures.

The enabling technologies for the Digital Enterprise that make up CAAST (Clouds, Apps, AI, Services & Things), even when they bear a familiar name such as Cloud, are deployed in a different way to enable Digital Business. Digital Business deploys these technologies to optimize for continuously changing opportunities to do business, gaining increased revenues and improved margins. Digital Business is more than a Front Office activity; it runs throughout the Enterprise Business and Operating model.

The contrast with the current role of IT, as a predominantly Back Office activity providing the administrative functions necessary to record transactions through stable processes, could not be greater. Information Technology needs to grasp the difference between its current role and this new one, and to understand Operational Technology, learning from the leadership that discipline has already shown in operating ‘real-time’ event optimization. The role of IT is necessary for compliance and will continue, but it is likely to become subordinate, and increasingly outsourced, as attention turns to investing in Digital Business.

IoT is all too often seen either as a consumer wave or as an interesting way to add more data to current IT processes. The Digitized representation of the Physical World, using IoT to allow assets and events to be ‘read’ and to create a dynamic, optimized ‘react’, is at the heart of Digital Business. IoT should be understood as a group of technologies that create the crucial digital data needed to extend the use of computers into new areas of the Enterprise Business operating model.

Business Managers have come to realize that continuous innovation and dynamic optimization require the decentralization of Enterprise operations that is a core feature of Digital Business models. After years of using IT to support ever more centralized business activities and processes, this is counter-intuitive to many IT professionals, who fear for the ‘Stateful’ synchronization of Enterprise data.

The IT role is to manage the integration of Systems in known relationships (Close Coupled) to maintain a single-version-of-the-truth (Stateful) data model. Digital Business requires a new role: ensuring that any Device can communicate whenever it needs to (Loose Coupled), and aligning data flow to activities in a Stateless model.
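To make the contrast between the two models more concrete, here is a minimal Python sketch under illustrative assumptions. The class names `CentralLedger` and `EventBus`, the topic name and the event fields are all hypothetical, not taken from any product or from the report. In the Close Coupled, Stateful case, callers must know the one central system, and every write updates the single authoritative record. In the Loose Coupled, Stateless case, a device publishes an event to a topic without knowing who consumes it, and each handler decides from the event alone.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List

# Close Coupled, Stateful (hypothetical): callers must know this system,
# and every write updates the single version of the truth.
class CentralLedger:
    def __init__(self) -> None:
        self.state: Dict[str, float] = {}

    def record(self, asset_id: str, reading: float) -> None:
        # One authoritative copy of each asset's latest reading.
        self.state[asset_id] = reading


# Loose Coupled, Stateless (hypothetical): publishers know only a topic name,
# and subscribers can be added at any time without changing the publisher.
Event = Dict[str, Any]
Handler = Callable[[Event], None]


class EventBus:
    def __init__(self) -> None:
        self.subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self.subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        # Deliver to whoever is listening; the publisher does not know who that is.
        for handler in self.subscribers[topic]:
            handler(event)


# Example usage
ledger = CentralLedger()
ledger.record("pump-7", 81.5)

alerts: List[str] = []


def overheat_handler(event: Event) -> None:
    # Keeps no state between events; decides using only this event's data.
    if float(event["temp_c"]) > 80.0:
        alerts.append(f"cool {event['asset_id']}")


bus = EventBus()
bus.subscribe("temperature", overheat_handler)
bus.publish("temperature", {"asset_id": "pump-7", "temp_c": 81.5})
bus.publish("temperature", {"asset_id": "fan-2", "temp_c": 40.0})

print(ledger.state)  # {'pump-7': 81.5}
print(alerts)        # ['cool pump-7']
```

The design point is that adding a second consumer, for example a hypothetical maintenance scheduler, needs only another `subscribe` call; nothing on the publishing Device changes, which is the sense of Loose Coupled used above.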

The Loose Coupled, Stateless nature of Digital Business, combined with the translation of the physical World into Digital Models, massively increases the volume of data to be ‘read’. Simultaneously, the time available to ‘respond’ in order to influence the outcome decreases, which makes the introduction of automation necessary.

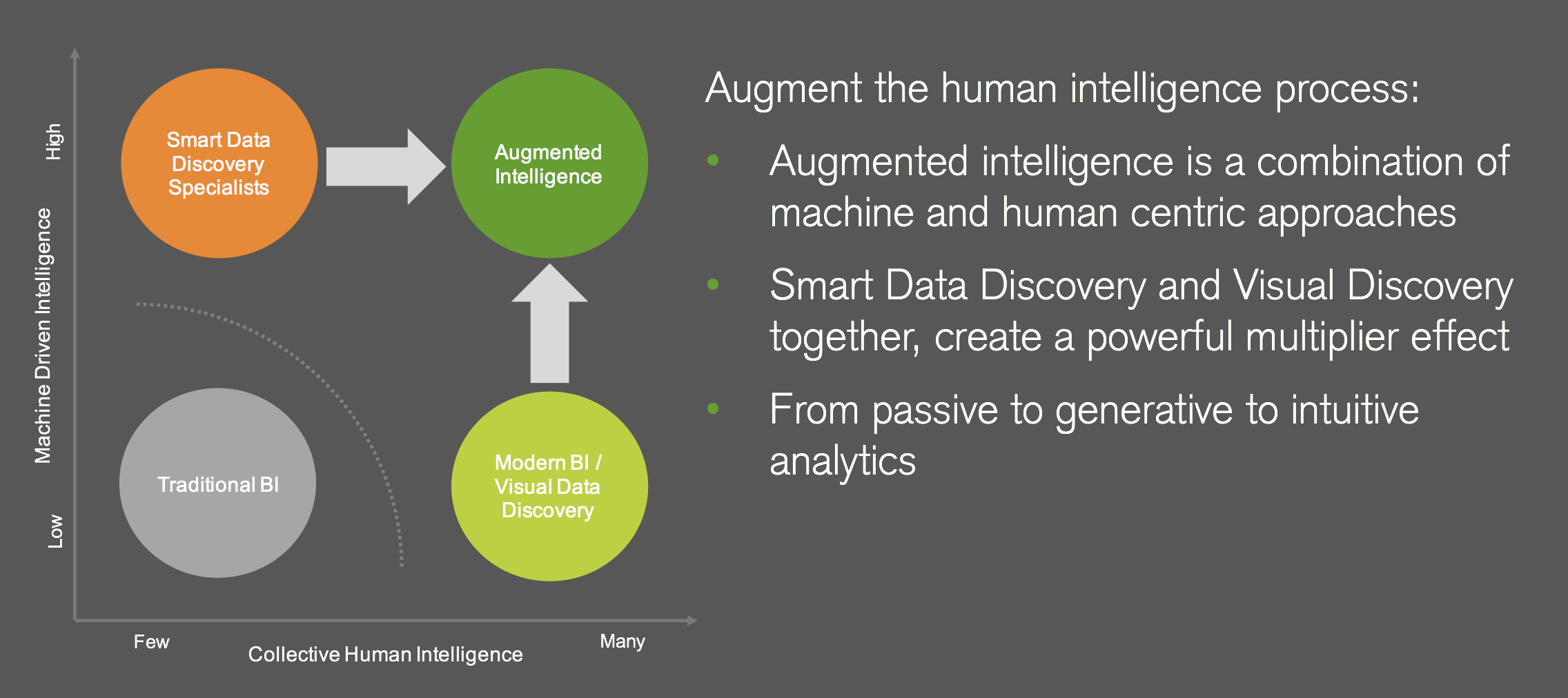

Correctly adopting the technology for Digital Business creates the environment in which to deploy AI (here standing for Augmented Intelligence). Just as Digital Business is not simply current online Business, AI is not Analytics and BI taken to the next level; it is a new approach, requiring an investment of time to understand how to deliver the ‘augmentation’ of human capacity needed to manage the vast increases in data and decisions that Digital Business brings.

In summary, IT departments cannot muddle through and expect, as before, to assimilate and adopt this latest round of technology change. Technology professionals will, as ever, be in great demand to deliver what Business requires. For now, IT professionals in IT departments need to conduct serious strategic knowledge-building exercises.

Equipped with this knowledge, the IT department becomes able to fulfill a wider role, perhaps in conjunction with the existing Operational Technology department, and certainly to bring Technology skills to the Enterprise Business strategy.

Interesting Links

1) ASUG (Association of SAP User Groups) has run a long-standing educational program in the form of Webinars given by a range of Experts, with Case Studies. The views are not limited to, or constrained by, SAP and its products, and offer distinctly practical advice. The current series can be found at https://www.asug.com/news/saps-journey-to-the-cloud-and-you-part-i

2) The Internet of Things World – Europe event illustrates the scale of IoT adoption for Digital Business through the number of speakers from many world-class enterprises, but it also demonstrates the almost total absence of IT departments and professionals from the program. See the speaker lists and program details here
