Salesforce Dreamforce 2017: 4 Next Steps for Einstein

Salesforce Einstein Prediction Builder, Bots, Data Insights and a new data-explorer feature stand out as the big AI and analytics announcements. Here’s what they’ll do for your business.

To Salesforce customer Bill Hoffman, Chief Analytics Officer at Minneapolis-based US Bank, the “A” in “AI” is about “augmented” intelligence because, as he said in a keynote at this week’s Dreamforce event in San Francisco, “there’s nothing artificial about it.”

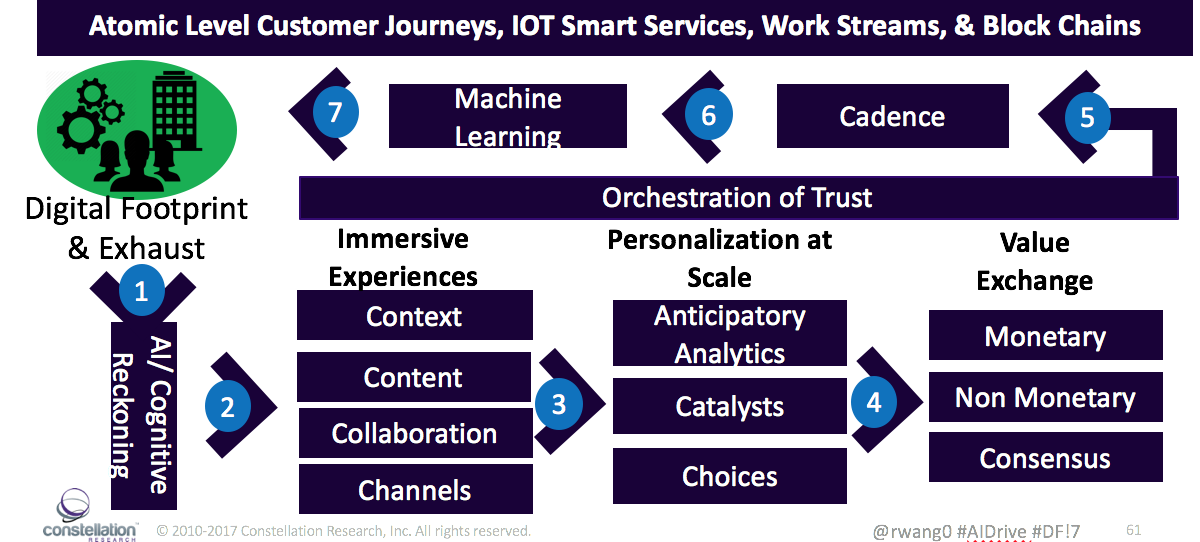

US Bank has deployed Salesforce Einstein capabilities including Predictive Lead Scoring and Einstein Analytics (formerly known as Wave) for customer attrition analysis and retention efforts. It's also using Einstein Discovery (formerly BeyondCore) to better understand customer behavior and cross-sell opportunities. The bank expects to roll out Einstein capabilities to more than 2,000 of its customer-facing financial advisers across the firm in hopes of “personalizing service at scale” and “creating a differentiated customer experience,” Hoffman said.

Personalizing at scale is precisely the idea behind two “myEinstein” capabilities announced at Dreamforce. Also announced were two Einstein Analytics capabilities. All four capabilities are coming to the portfolio next year. Here’s what they promise to do for your business.

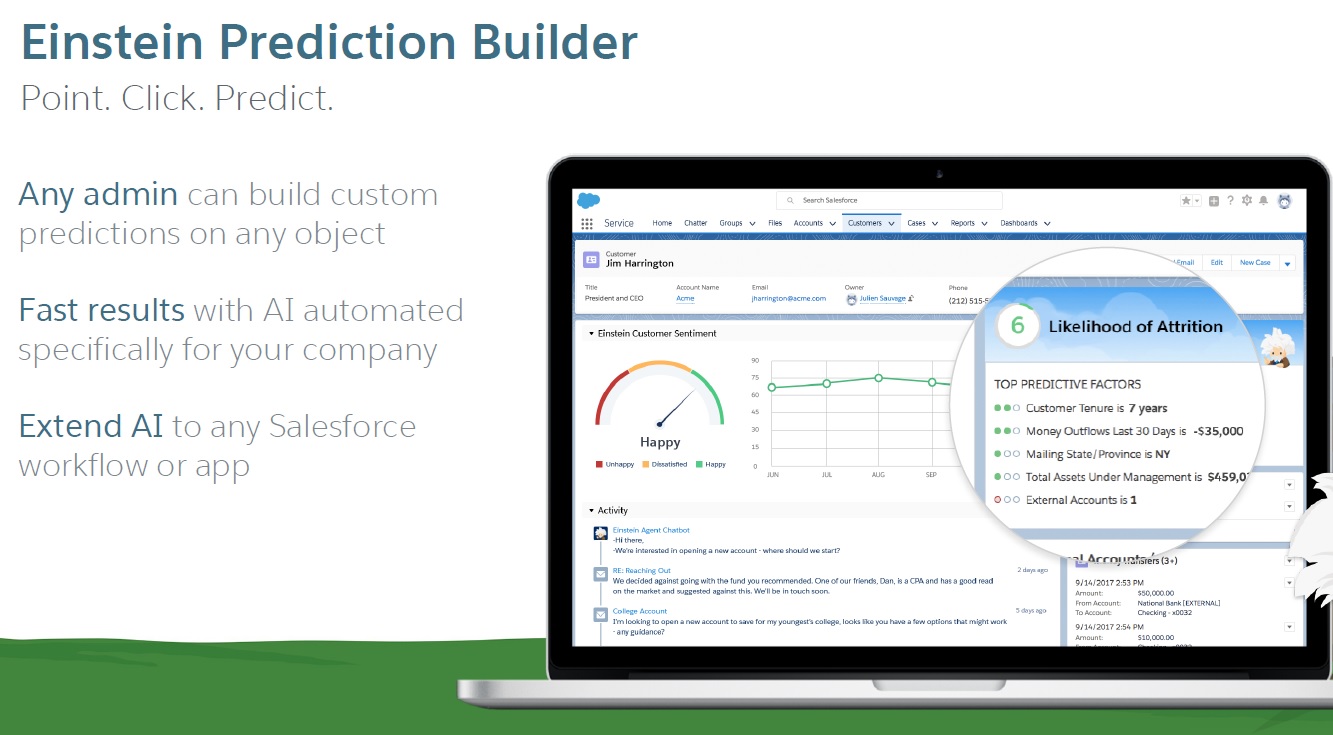

Einstein Prediction Builder: Plenty of Salesforce customers are using or considering machine-learning-based Einstein capabilities, most of which were detailed in my 19-page report published earlier this year. But at Dreamforce 2017 we heard the revealing stat that some 80% of the customer data in Salesforce is tied to custom (customer-defined) fields and objects. No surprise, then, that the number-one ask among Salesforce customers was for customizable, as well as pre-built, Einstein insights, predictions and recommendations.

Einstein Prediction Builder is a no-code capability designed to enable non-data-scientists to develop predictions using custom fields. Use cases are limitless, but popular use cases are likely to include cross-sell/up-sell, churn, CSAT and propensity-to-escalate analyses. Prediction Builder will be powered by the same machine-learning data pipeline that handles millions of Einstein predictions per day, but it will be opened up – starting with a February beta release and likely June general release – to custom fields and objects in Salesforce. Pricing has not been finalized.

Einstein Bots: Salesforce picked up strong natural language understanding and natural language translation capabilities through its 2016 acquisition of MetaMind. Einstein Bots, the second myEinstein feature, will couple these language capabilities with Salesforce data and the Salesforce workflow engine to power automated customer-service agents. The idea is to handle the bulk of the simple, frequent service cases, such as user password resets, while leaving the long tail of complex and infrequent service inquiries to human agents.

As with Prediction Builder, Einstein Bot development will be a no-code proposition. It will start with point-and-click selections and workflow setup, along with uploading spreadsheets of sample customer-service interaction text to train the language model. Beta release is expected in February with general availability to follow in June. Pricing will be announced at general availability, but I expect it to be based on the volume of cases handled over a specified time. The Bots will start with text-based interaction, but voice-based interaction is likely to follow.

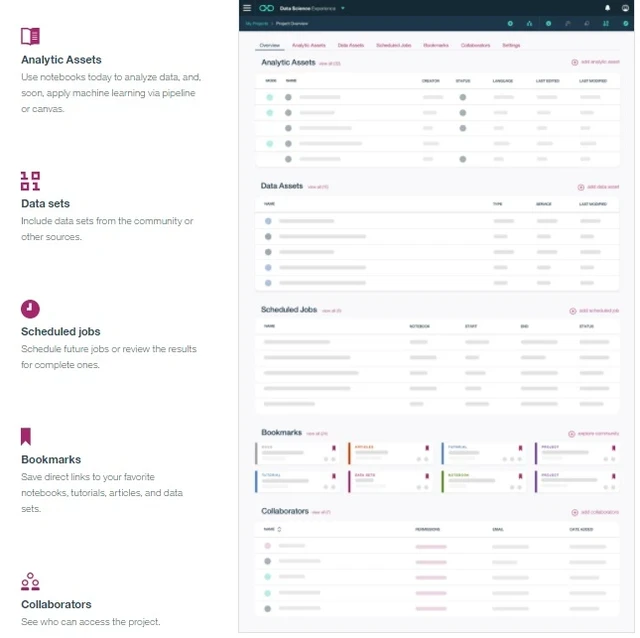

Einstein Data Insights: This new Einstein Analytics (formerly Wave) capability provides deeper insights into standard Salesforce reports from the Sales Cloud, Service Cloud and, eventually, other clouds. Powered by the same engine behind Einstein Discovery, Einstein Data Insights will automatically surface important trends, outliers, changes over time and even data-quality problems within standard reports, displaying a combination of visualizations and textual explanations. Users will press a button embedded on a standard report and the visualizations and textual explanations will appear on the right side of the screen (see image below). This capability is also expected to see beta launch in February with general availability next June. The pricing model has yet to be determined.

Einstein data explorer feature: This capability, which will be included with Einstein Analytics, will let you have "a conversation with your data," says Salesforce, by typing in questions in plain English. Behind the scenes, keyword-driven interpretation will help you drill down on dashboards and visualizations to better understand not just what happened but why it happened. You could drill down on a total figure, for example, by typing “amount by product.” Or you could analyze performance by typing in “lost deals by product.” This feature is expected to be generally available in February.
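To make the idea of keyword-driven interpretation concrete, here is a minimal sketch of how a phrase such as “amount by product” could be split into a measure and a grouping field and then applied to some rows. This is not Salesforce's implementation; the sample rows, the field names and the `parse_query` and `answer` helpers are all hypothetical and exist only for illustration.

```python
from collections import defaultdict

# Hypothetical sample rows; field names are illustrative, not a Salesforce schema.
DEALS = [
    {"product": "CRM", "amount": 100.0, "stage": "won"},
    {"product": "CRM", "amount": 50.0, "stage": "lost"},
    {"product": "Analytics", "amount": 70.0, "stage": "lost"},
]

# The only measures this toy interpreter understands (an assumption).
KNOWN_MEASURES = {"amount", "lost deals"}


def parse_query(text):
    """Split '<measure> by <dimension>' into its two keyword parts."""
    measure, sep, dimension = text.lower().partition(" by ")
    measure, dimension = measure.strip(), dimension.strip()
    if not sep or not measure or not dimension:
        raise ValueError("expected a query of the form '<measure> by <dimension>'")
    if measure not in KNOWN_MEASURES:
        raise ValueError(f"unknown measure: {measure!r}")
    return measure, dimension


def answer(text, rows):
    """Aggregate the rows according to the parsed keywords."""
    measure, dimension = parse_query(text)
    totals = defaultdict(float)
    for row in rows:
        if dimension not in row:
            raise ValueError(f"unknown dimension: {dimension!r}")
        if measure == "amount":
            # Sum the deal amount within each group.
            totals[row[dimension]] += row["amount"]
        elif row["stage"] == "lost":
            # "lost deals": count only the rows whose stage is "lost".
            totals[row[dimension]] += 1
    return dict(totals)
```

With the sample rows above, `answer("amount by product", DEALS)` groups the total deal amount by product, and `answer("lost deals by product", DEALS)` counts lost deals per product. A production feature would need a far richer vocabulary than two hard-coded measures.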

My Perspective on Einstein’s Progress

As compelling as the coming Einstein capabilities are, the big question on the minds of many customers is “how much will it cost?” It seems we’re still in a chicken-and-egg phase in which both Salesforce and customers are trying to figure out how much Einstein capabilities are worth. Different sorts of predictions and recommendations have different values, depending on the cloud and the types of actions triggered. The size and nature of the customer adds another dimension of complexity, with large enterprises sometimes preferring all-you-can-eat enterprise deals. Salesforce, meanwhile, needs to establish clear revenue expectations to keep its investors and Wall Street happy. Innovation presents its challenges.

Picking up on trends in big data and open-source software pricing these days, one possible pricing approach would be to provide free access to Einstein development tools and a limited number of predictions or recommendations so businesses can get a sense of what they can do. Once the capability is deployed, volume-based per-prediction or per-recommendation charges would kick in. In this way, charges would be tied to the value delivered to the customer, although different customers would surely have different perceptions of value, so it might be hard to come up with a one-size-fits-all pricing scheme.
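The arithmetic of such a free-allowance-plus-usage model is simple enough to sketch. The function below is purely illustrative: Salesforce has announced no such pricing, and the allowance and per-prediction price used here are made-up numbers, expressed in cents to keep the arithmetic exact.

```python
def monthly_charge_cents(predictions, free_allowance, price_per_prediction_cents):
    """Charge only for predictions beyond the free allowance.

    All parameters are hypothetical; no actual Einstein pricing is implied.
    """
    if predictions < 0 or free_allowance < 0 or price_per_prediction_cents < 0:
        raise ValueError("inputs must be non-negative")
    # Usage inside the free allowance costs nothing.
    billable = max(0, predictions - free_allowance)
    return billable * price_per_prediction_cents
```

Under these assumed numbers, a customer with a 1,000-prediction allowance who makes 500 predictions pays nothing, while one who makes 1,500 predictions at 2 cents each pays for the 500 over the allowance, or 1,000 cents.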

One thing that customers might have found confusing at Dreamforce was the distinction between Einstein and Einstein Analytics (formerly Wave Analytics). There were two separate keynotes at Dreamforce and there are two separate teams behind different sets of Einstein capabilities. But they are both part of one portfolio and a continuum from descriptive and diagnostic analytics to predictive analytics and prescriptive recommendations and actions (as well as advanced language and vision capabilities and APIs for human-interactive applications). Before you can get to the predictive and prescriptive part you need to have good data and reporting in place.

US Bank is using capabilities across the Einstein continuum. Asked for advice during a keynote, Bill Hoffman said you have to start with data quality, and you have to bring in key stakeholders and risk management and compliance partners from the beginning. In short, don’t expect to get the sizzle of “AI” without addressing the meat-and-potatoes of data management and baseline reporting and analytics.

The unsung announcements that didn’t get as much attention at Dreamforce included a recent rewrite of the Einstein Analytics engine said to deliver a 30% reduction in data-ingestion and query times. Available data capacity was also more than doubled to 1 billion rows per customer. For easier data loading from external sources, Salesforce has added out-of-the-box data connectors for AWS Redshift, Google BigQuery and Microsoft Dynamics, and more than 20 additional pre-built connectors are to be added over the next six months. Finally, Smart Data Prep capabilities have been enhanced with data profiling, auto clustering, anomaly detection, filtering and transformation suggestions.

These upgrades aren’t the sexy stuff, but they are day-to-day productivity improvements that will help sell customers on handling analytics within Salesforce and advancing to Einstein predictions and recommendations.
