Google Says The Modern Data Stack Breaks at Agent Scale. Here’s Where Google Is Forcing a Rethink.

The modern data stack isn’t failing. It’s succeeding, but the problem has shifted.

It was built to help humans answer questions.

But in an agent-driven enterprise, the problem is no longer answering questions … It’s executing decisions continuously, autonomously, and at scale.

That’s the real story behind Google’s “Agentic Data Cloud” announcements.

Why the Modern Data Stack Breaks

The “modern data stack” (ingest → store → transform → analyze → govern) was optimized for analytics workflows. At agent scale, the analytic workflow assumptions collapse. Many of us feel it. Google just called it out.

- Built for human latency, not machine velocity … at massive scale Dashboards assume a human initiates action. Agents operate continuously/proactively … monitoring, deciding, and acting in real time across a fleet of thousands of agents.

- Separation of insight and execution Analytics systems “think.” Operational systems “act.” Agents require both in real-time.

- Data without meaning breaks automation Humans infer context. Machines require explicit understanding of definitions, relationships, and constraints to make decisions that can be trusted to act/automate.

- Fragmentation becomes a scaling tax. “Best-of-breed” solutions and “walled-garden” data stacks become a liability when costs grow non-linearly, governance fragments, and integration becomes brittle.

What made the modern data stack attractive (e.g., modularity and flexibility) is exactly what makes it fail at agent scale.

What Google Just Announced

Google didn’t introduce a new platform from scratch.

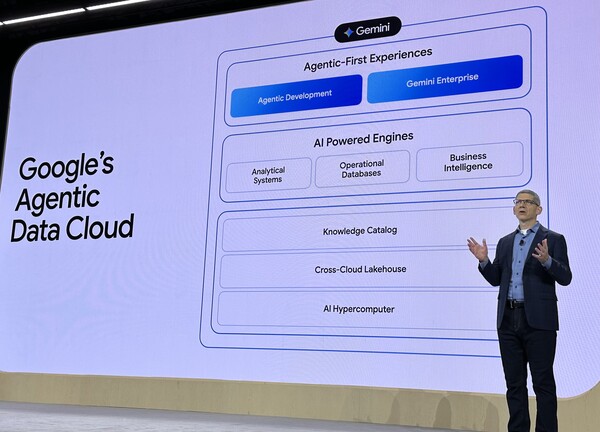

They reassembled their data + AI stack and introduced key primitives to address the shortcomings of the Modern Data Stack → the Google Agentic Data Cloud (diagram below).

NOTE: for the 100’s of other announcements, check out Google’s release blog here.

1. Agentic-First Experiences (build and consume agents): Where agents are created (Agentic Development) and used (Gemini Enterprise)

- LookML Agent + BigQuery Measures auto generates LookML semantics from document and models, while embedding business metrics directly into BigQuery,

- Data Agent Kit Moves development from pipeline engineering → intent-driven orchestration.

Addresses the LookerML semantic cold start to assign data meaning, manages semantic drift, and reduces agentic developer management overhead and bottlenecks. Expect to still need custom logic, guardrails and governance.

2. AI-Powered Engines: Existing data cloud foundation, from analytics (BigQuery, Dataflow, etc), operational systems (AlloyDB, Spanner, etc), to BI (Looker) positioned as the execution backbone.

- Tightened integration between analytics and operational systems to drive execution

- Smart Storage automatically tags, structures, and relates unstructured data (e.g., documents, images) as it lands in storage, generating schemas and relationships.

- Performance improvements for continuous workloads (e.g., Spark acceleration, BigQuery scaling)

Ensures ability to trigger execution, complete as possible data for agent grounding and decisioning, although integration depth uneven across the engines..

3. Knowledge Catalog (key new addition): A unified layer continuously aggregating definitions, relationships, and usage patterns with access-aware retrieval.

- Built on LookML + Dataplex

- Hybrid search (semantic + keyword) with policy enforcement

- Continuous enrichment of relationships and usage

This solves the lack of relevant business context for agents. Attendee conversations reflected strong interest in unifying definitions across systems, recognizing Google’s strong start, but noted the need for process-awareness and agent execution governance.

4. Cross-Cloud Lakehouse (open data layer, Iceberg + Federation): Federation across Snowflake, Databricks, AWS using Iceberg and open formats to enable zero-copy access, govern and act on data without centralizing to BigQuery. Cross cloud support has dramatically improved, but still plan for potential tradeoffs in performance and latency across heterogeneous environments.

5. AI Hypercomputer (infrastructure): Google’s leading-edge approach to integrated compute (new TPUs v8 optimized for training and inference), storage, networking, models and software co-optimized across those traditional boundaries to deliver on agent-scale compute and latency economics that are hard to beat.

Bottom line: Google is saying you need a system that supports the exponential growth of agents/AI-driven decision automation by delivering: 1). continuous, event-driven system that can work autonomously, 2). closed loop between reasoning and action that learns over time, 3). shared runtime context to bridge data to knowledge to trusted actions, and 4). a highly performant, cost-efficient integrated + federated (read open) architecture.

Individually, these areas and the ideas aren’t new.

Together, they represent Google’s redefinition of the data stack, and how Google will differentiate from the other hyperscalers and data platforms.

Implications for data + AI leaders

The shift from Semantics → Context

Even as most data + AI leaders are already familiar with “context” for AI-driven decisioning, most enterprises still frame the agent problem as a data → AI problem, or even an analytics → agent problem. In practice, what agents need is semantics → context.

Semantics grounded the last generation focused on insights:

- What data means

- Metrics, dimensions, relationships

Google is tackling the areas of context providing knowledge and grounding to agents to enable them to act predictably:

- What matters in this situation

- Cross-system relationships

- Usage patterns

- Access constraints

Understanding context also means drilling into how different vendors define the word.

Context already advancing to process + governance of action

Constellation Research sees leaders and fast-follower enterprises expand context to deliver on key business processes to enable the trusted scale up of AI-driven decisioning and agents. Understand your use case and what type of context you need.

- Operational context: What is happening right now? (state, workflows, dependencies)

- Process awareness: What step am I on? What should happen next? Not just what, but why did something happen in a business process?

- Governance of action: What is allowed vs not allowed?

We are moving from systems that tell agents what data means and how to calculate it … to systems that support reasoning on why something happened and what actions they’re allowed to take.

The hidden … not so hidden tension: Open vs Optimized

Google’s approach introduces a new tradeoff enterprises haven’t fully internalized, but are increasingly faced with … vertically integrated stacks spanning infrastructure to agents. Yeah, more than one vendor wants to be Apple.

On one side, Google is (and has for a long time) pushing openness: cross-cloud federation, Iceberg-based architectures, Open Semantic Interface (OSI), support for protocols like A2A + MCP (Google has seen 20X MCP usage growth just in the last 6 months) … just to name a few.

On the other, especially given the lack of maturity across the agentic stack, Google is vertically optimizing for simplified governance, increased performance and lower cost needed to support agent scale and low latency reasoning and execution via co-optimization/integration across infrastructure, models, software, and data.

These are not fully compatible goals.

- Openness enables flexibility and interoperability

- Optimization enables performance and control

And in customers conversations at Next,

Openness wins the architecture conversation Optimization wins at runtime.

In the agentic era, runtime is where value is created alongside sensitivity to the rising cost of tokens, and projected exponentially rising and compounding enterprise use . That means enterprises will need to decide where they are willing to compromise on potential lock-in.

From Systems of Intelligence to Systems of Action

Google is asking us to step back and see this launch not just as a technology shift, but an architectural one.

The last decade focused on building systems of intelligence: to understand what happened and surface insights and predictions.

The next phase is about systems of action:

- Platforms that decide and execute in real time (see my report on Decision Centric Architectures)

- Systems that close the loop between signal and outcome

This changes what matters. Instead of optimizing for query performance, dashboard adoption, and data accessibility. Organizations must now optimize for:

- Decision latency

- Execution accuracy

- Closed-loop learning on impact

MyPOV

We are maturing as an industry: The stance of the leadership team reflects the rising Google, and industry, maturity around AI-driven decisioning and agentic architecture needs. Last year, Thomas Kurian took the stage, and surprisingly to those who have known him over the years, smiled. He laid out his vision and had clear momentum with a parade of early customer stories. This year was different. Thomas smiled again (the fact we count smiles is telling), had even more customer stories, but what was different was the shift from tight vision to the comfort and confidence the Google team had in telling HOW they were helping customers leverage AI to drive outcomes.

Google named the failure point: the modern data stack doesn’t break at analytics. It breaks at execution. Over the last couple years, the industry has been adding on tools to support AI adoption. Google calling out the coming failure of data+AI foundation “bolt-ons” to effectively scale.

Google repositioned themselves: Google Data Cloud should no longer be evaluated as a warehouse/analytics platform. After Next ’26, the right lens is whether Google can become an autonomous decision infrastructure platform: data + context + agents + execution + governance. This puts them as one of the few hyperscales who directly compete with Databricks and Snowflake.

Built atop their strengths:

- Full-stack ownership (infra → model → data)

- Strength in unstructured data + AI/ML

- Less legacy fragmentation vs competitors

But but but, enterprise leaders have to separate “semantic grounding” from full decision governance. Google delivered on semantic definitions, relationships, usage, metadata, access-aware retrieval, but both Google and the market is still early on operational context: process state, decision rights, workflow constraints, and auditability of actions. Operational context means addressing the enterprise reality of messy, multi-system environments, and bridging Google’s gap of not owning the application/workflow layer that has rich operational context. And context without operation context (process, state) and governance = incomplete control.

Questions for Data + AI Leaders

This is not about adopting Google’s stack. It’s about rethinking your architecture.

Three questions matter now:

- Where does context live? Is it formalized, governed, and scalable … or fragmented across teams?

- Who owns execution? Are your systems informing decisions—or acting on them?

- How much fragmentation can you afford? At agent scale, every integration becomes a tax.

These are not incremental decisions. They will define how your organization operates in an agent-driven world.

Final Thought

The modern data stack optimized for access to data.

The next generation will be defined by how effectively it turns that data into decisions and actions at scale. Contextualizing more data and signals, managing context as a workflow, governing fleets of agents … Google is naming this as the shift from systems of intelligence to systems of action.

Expect the rest of the market to quickly respond.

What did I miss? Comments? Love to hear your thoughts 👇🏻